Global Highlights in Neuroengineering 2005-2018 – Logan Thrasher Collins

Optogenetic stimulation using ChR2

(Boyden, Zhang, Bamberg, Nagel, & Deisseroth, 2005)

- Ed S. Boyden, Karl Deisseroth, and colleagues developed optogenetics, a revolutionary technique for stimulating neural activity.

- Optogenetics involves engineering neurons to express light-gated ion channels. The first channel used for this purpose was ChR2 (channelrhodopsin-2, a protein originally found in green algae which responds to blue light). A neuron expressing ChR2 is therefore stimulated when it is exposed to light of the appropriate wavelength.

- Over time, optogenetics has gained a place as an essential experimental tool for neuroscientists across the world. It has been expanded upon and improved in numerous ways and has even allowed control of animal behavior via implanted fiber optics and other light sources. Optogenetics may eventually be used in the development of improved brain-computer interfaces.

Blue Brain Project cortical column simulation

(Markram, 2006)

- In the early stages of the Blue Brain Project, ~10,000 neurons were mapped in 2-week-old rat somatosensory neocortical columns with sufficient resolution to show rough spatial locations of the dendrites and synapses.

- After constructing a virtual model, algorithmic adjustments refined the spatial connections between neurons to increase accuracy (over 10 million synapses).

- The cortical column was emulated using the Blue Gene/L supercomputer and the emulation was highly accurate compared to experimental data.

Optogenetic silencing using halorhodopsin

(Han & Boyden, 2007)

- Ed Boyden continued developing optogenetic tools to manipulate neural activity. Along with Xue Han, he expressed a codon-optimized version of an archaeal halorhodopsin (along with the ChR2 protein) in neurons.

- Upon exposure to yellow light, halorhodopsin pumps chloride ions into the cell, hyperpolarizing the membrane and inhibiting neural activity.

- Using ChR2 and halorhodopsin, neurons could be easily activated and inhibited using blue and yellow light respectively.

Brainbow

(Livet et al., 2007)

- Lichtman and colleagues used Cre/Lox recombination tools to create genes which express a randomized set of three or more differently-colored fluorescent proteins (XFPs) in a given neuron, labeling the neuron with a unique combination of colors. About ninety distinct colors were emitted across a population of genetically modified neurons.

- The detailed structures within neural tissue equipped with the Brainbow system can be imaged much more easily since neurons can be distinguished via color contrast.

- As a proof-of-concept, hundreds of synaptic contacts and axonal processes were reconstructed in a selected volume of the cerebellum. Several other neural structures were also imaged using Brainbow.

- The fluorescent proteins expressed by the Brainbow system are usable in vivo.
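The combinatorial idea behind Brainbow can be illustrated with a short counting sketch. It assumes, purely for illustration, that a neuron carries several transgene copies and that each copy independently settles on one of the available XFPs; the function name and the copy numbers are hypothetical and are not taken from Livet et al.

```python
from math import comb


def color_combinations(num_proteins, num_copies):
    """Count the distinct mixtures of fluorescent proteins a neuron could show.

    Toy model (an assumption): each of `num_copies` transgene copies
    expresses exactly one of `num_proteins` XFPs, and only the number of
    copies expressing each XFP matters, not which copy expresses it.
    This is the number of multisets of size `num_copies` drawn from
    `num_proteins` types.
    """
    return comb(num_copies + num_proteins - 1, num_proteins - 1)


# One copy gives only the pure colors; extra copies add mixed hues.
one_copy = color_combinations(3, 1)
three_copies = color_combinations(3, 3)
```

Under this toy model the number of possible hues grows quickly with copy number, which is the intuition behind labeling neighboring neurons with distinguishable colors.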

High temporal precision optogenetics

(Gunaydin et al., 2010)

- Karl Deisseroth, Peter Hegemann, and colleagues used protein engineering to improve the temporal resolution of optogenetic stimulation.

- Glutamic acid at position 123 in ChR2 was mutated to threonine, producing a new ion channel protein (dubbed ChETA).

- The ChETA protein allows for induction of spike trains with frequencies up to 200 Hz and greatly decreases the incidence of unintended spikes. Furthermore, ChETA eliminates plateau potentials (a phenomenon which interferes with precise control of neural activity).

Hippocampal prosthesis in rats

(Berger et al., 2012)

- Theodore Berger and his team developed an artificial replacement for neurons which transmit information from the CA3 region to the CA1 region of the hippocampus.

- This cognitive prosthesis employs recording and stimulation electrodes along with a multi-input multi-output (MIMO) model to encode the information in CA3 and transfer it to CA1.

- The hippocampal prosthesis was shown to restore and enhance memory in rats as evaluated by behavioral testing and brain imaging.

In vivo superresolution microscopy for neuroimaging

(Berning, Willig, Steffens, Dibaj, & Hell, 2012)

- Stefan Hell (2014 Nobel laureate in chemistry) developed stimulated emission depletion microscopy (STED), a type of superresolution fluorescence microscopy which allows imaging of synapses and dendritic spines.

- STED microscopy uses transgenic neurons that express fluorescent proteins. A doughnut-shaped depletion beam is overlaid on the excitation spot and switches off fluorescence at its periphery through stimulated emission, so only a small central region emits. Although light’s wavelength would ordinarily limit the resolution (the diffraction limit), this confinement of the emitting region overcomes the limitation.

- Neurons in transgenic mice (equipped with glass-sealed holes in their skulls) were imaged using STED. Synapses and dendritic spines were observed up to fifteen micrometers below the surface of the brain tissue.

Eyewire: crowdsourcing method for retina mapping

(Marx, 2013)

- The Eyewire project was created by Sebastian Seung’s research group. It is a crowdsourcing initiative for connectomic mapping within the retina towards uncovering neural circuits involved in visual processing.

- Laboratories first collect data via serial electron microscopy as well as functional data from two-photon microscopy.

- In the Eyewire game, images of tissue slices are provided to players who then help reconstruct neural morphologies and circuits by “coloring in” the parts of the images which correspond to cells and stacking many images on top of each other to generate 3D maps. Artificial intelligence tools help provide initial “best guesses” and guide the players, but the people ultimately perform the task of reconstruction.

- By November 2013, around 82,000 participants had played the game. Its popularity continues to grow.

The BRAIN Initiative

(“Fact Sheet: BRAIN Initiative,” 2013)

- The BRAIN Initiative (Brain Research through Advancing Innovative Neurotechnologies) provided neuroscientists with $110 million in governmental funding and $122 million in funding from private sources such as the Howard Hughes Medical Institute and the Allen Institute for Brain Science.

- The BRAIN Initiative focused on funding research which develops and utilizes new technologies for functional connectomics. It helped to accelerate research on tools for decoding the mechanisms of neural circuits in order to understand and treat mental illness, neurodegenerative diseases, and traumatic brain injury.

- The BRAIN Initiative emphasized collaboration between neuroscientists and physicists. It also pushed forward nanotechnology-based methods to image neural tissue, record from neurons, and otherwise collect neurobiological data.

The CLARITY method for making brains translucent

(Chung & Deisseroth, 2013)

- Karl Deisseroth and colleagues developed a method called CLARITY to make samples of neural tissue optically translucent without damaging the fine cellular structures in the tissue. Using CLARITY, entire mouse brains have been turned transparent.

- Mouse brains were infused with hydrogel monomers (acrylamide and bisacrylamide) as well as formaldehyde and some other compounds for facilitating crosslinking. Next, the hydrogel monomers were crosslinked by incubating the brains at 37°C. Lipids in the hydrogel-stabilized mouse brains were then extracted using an ionic detergent (sodium dodecyl sulfate) together with electrophoresis.

- CLARITY allows antibody labeling, fluorescence microscopy, and other optically-dependent techniques to be used for imaging entire brains. In addition, it renders the tissue permeable to macromolecules, which broadens the types of experimental techniques that these samples can undergo (e.g. macromolecule-based stains).

Telepathic rats engineered using hippocampal prosthesis

(S. Deadwyler et al., 2013)

- Berger’s hippocampal prosthesis was implanted in pairs of rats. When “donor” rats were trained to perform a task, they developed neural representations (memories) which were recorded by their hippocampal prostheses.

- The donor rat memories were run through the MIMO model and transmitted to the stimulation electrodes of the hippocampal prostheses implanted in untrained “recipient” rats. After receiving the memories, the recipient rats showed significant improvements on the task that they had not been trained to perform.

Integrated Information Theory 3.0

(Oizumi, Albantakis, & Tononi, 2014)

- Integrated information theory (IIT) was originally proposed by Giulio Tononi in 2004. IIT is a quantitative theory of consciousness which may help explain the hard problem of consciousness.

- IIT begins by assuming the following phenomenological axioms: each experience is characterized by how it differs from other experiences, an experience cannot be reduced to independent parts, and the boundaries which distinguish individual experiences are describable as having defined “spatiotemporal grains.”

- From these phenomenological axioms and the assumption of causality, IIT identifies maximally irreducible conceptual structures (MICS) associated with individual experiences. MICS represent particular patterns of qualia that form unified percepts.

- IIT also outlines a mathematical measure of an experience’s quantity. This measure is called integrated information or Φ.

Expansion Microscopy

(F. Chen, Tillberg, & Boyden, 2015)

- The Boyden group developed expansion microscopy, a method which enlarges neural tissue samples (including entire brains) with minimal structural distortions and so facilitates superior optical visualization of the scaled-up neural microanatomy. Furthermore, expansion microscopy greatly increases the optical translucency of treated samples.

- Expansion microscopy operates by infusing a swellable polymer network into brain tissue samples along with several chemical treatments to facilitate polymerization and crosslinking and then triggering expansion via dialysis in water. With 4.5-fold enlargement, expansion microscopy only distorts the tissue by about 1% (computed using a comparison between control superresolution microscopy of easily-resolvable cellular features and the expanded version).

- Before expansion, samples can express various fluorescent proteins to facilitate superresolution microscopy of the enlarged tissue once the process is complete. Furthermore, expanded tissue is highly amenable to fluorescent stains and antibody-based labels.
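The scaling behind expansion microscopy can be made concrete with a little arithmetic. The sketch below uses the 4.5-fold linear enlargement quoted above; the 300 nm figure for a conventional diffraction-limited microscope is an assumed round number, and the function name is hypothetical.

```python
def expansion_summary(linear_factor, optical_resolution_nm):
    """Return (volume_factor, effective_resolution_nm) for an expanded sample.

    A sample enlarged `linear_factor` times along each axis grows by the
    cube of that factor in volume, and features that were closer together
    than the microscope could resolve are pulled apart by the same
    linear factor.
    """
    volume_factor = linear_factor ** 3
    effective_resolution_nm = optical_resolution_nm / linear_factor
    return volume_factor, effective_resolution_nm


# 4.5x comes from the text; 300 nm is an illustrative assumption.
volume_factor, effective_nm = expansion_summary(4.5, 300.0)
print(f"volume grows about {volume_factor:.0f}x")
print(f"effective resolution is about {effective_nm:.0f} nm")
```

Under these assumptions an ordinary microscope resolves detail in the expanded tissue that would otherwise call for superresolution methods.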

Japan’s Brain/MINDS project

(Okano, Miyawaki, & Kasai, 2015)

- In 2014, the Brain/MINDS (Brain Mapping by Integrated Neurotechnologies for Disease Studies) project was initiated to further neuroscientific understanding of the brain. This project received nearly $30 million in funding for its first year alone.

- Brain/MINDS focuses on studying the brain of the common marmoset (a small non-human primate widely used in Japanese neuroscience research), developing new technologies for brain mapping, and understanding the human brain with the goal of finding new treatments for brain diseases.

OpenWorm

(Szigeti et al., 2014)

- The anatomical C. elegans connectome was originally mapped in 1976 by Albertson and Thomson. More data has since been collected on neurotransmitters, electrophysiology, cell morphology, and other characteristics.

- Szigeti, Larson, and their colleagues made an online platform for crowdsourcing research on C. elegans computational neuroscience, with the goal of completing an entire “simulated worm.”

- The group also released software called Geppetto, a program that allows users to manipulate both multicompartmental Hodgkin-Huxley models and highly efficient soft-body physics simulations (for modeling the worm’s electrophysiology and anatomy).

The TrueNorth Chip from DARPA and IBM

(Akopyan et al., 2015)

- The TrueNorth neuromorphic computing chip was constructed and validated by DARPA and IBM. TrueNorth uses circuit modules which mimic neurons. Inputs to these fundamental circuit modules must overcome a threshold in order to trigger “firing.”

- The chip can emulate up to a million neurons with over 250 million synapses while requiring far less power than traditional computing devices.
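The threshold behavior described above can be sketched with a generic integrate-and-fire unit. This is a simplified illustration, not TrueNorth’s actual neuron model; the class name, weights, and threshold are hypothetical.

```python
class ThresholdNeuron:
    """Minimal integrate-and-fire unit (a generic sketch, not IBM's design)."""

    def __init__(self, weights, threshold):
        self.weights = weights      # one synaptic weight per input line
        self.threshold = threshold  # potential needed to trigger firing
        self.potential = 0

    def step(self, input_spikes):
        """Integrate one time step of binary inputs; return True on firing."""
        for weight, spike in zip(self.weights, input_spikes):
            if spike:
                self.potential += weight
        if self.potential >= self.threshold:
            self.potential = 0  # reset after firing
            return True
        return False


neuron = ThresholdNeuron(weights=[2, 1], threshold=3)
outputs = [neuron.step(spikes) for spikes in ([1, 0], [0, 1], [0, 0])]
```

In this example the first input alone leaves the unit below threshold, the second pushes the accumulated potential to the threshold and triggers a firing event, and the third step is silent because the potential was reset.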

Human Brain Project cortical mesocircuit reconstruction and simulation

(Markram et al., 2015)

- The HBP digitally reconstructed a 0.29 mm³ region of rat cortical tissue (~31,000 neurons and 37 million synapses) based on morphological data, “connectivity rules,” and additional datasets. The cortical mesocircuit was emulated using the Blue Gene/Q supercomputer.

- This emulation was sufficiently accurate to reproduce emergent neurological processes and yield insights on the mechanisms of their computations.

Neural lace

(Liu et al., 2015)

- Charles Lieber’s group developed a syringe-injectable electronic mesh made of submicrometer-thick wiring for neural interfacing.

- The meshes were constructed using novel soft electronics for biocompatibility. Upon injection, the neural lace expands to cover and record from centimeter-scale regions of tissue.

- Neural lace may allow for “invasive” brain-computer interfaces to circumvent the need for surgical implantation. Lieber has continued to develop this technology towards clinical application.

Expansion FISH

(F. Chen et al., 2016)

- Boyden, Chen, Marblestone, Church, and colleagues combined fluorescent in situ hybridization (FISH) with expansion microscopy to image the spatial localization of RNA in neural tissue.

- The group developed a chemical linker to covalently attach intracellular RNA to the infused polymer network used in expansion microscopy. This allowed for RNAs to maintain their relative spatial locations within each cell post-expansion.

- After the tissue was enlarged, FISH was used to fluorescently label targeted RNA molecules. In this way, RNA localization was more effectively resolved.

- As a proof-of-concept, expansion FISH was used to reveal the nanoscale distribution of long noncoding RNAs in nuclei as well as the locations of RNAs within dendritic spines.

Neural dust

(Seo et al., 2016)

- Michel Maharbiz’s group invented implantable, ~1 mm biosensors for wireless neural recording and tested them in rats.

- This neural dust could be miniaturized to less than 0.5 mm or even to microscale dimensions using customized electronic components.

- Neural dust motes consist of two recording electrodes, a transistor, and a piezoelectric crystal.

- The neural dust received external power from ultrasound. Neural signals were recorded by measuring disruptions to the piezoelectric crystal’s reflection of the ultrasound waves. Signal processing mathematics allowed precise detection of activity.

The China Brain Project

(Poo et al., 2016)

- The China Brain Project was launched to help understand the neural mechanisms of cognition, develop brain research technology platforms, develop preventative and diagnostic interventions for brain disorders, and to improve brain-inspired artificial intelligence technologies.

- This project will take place from 2016 until 2030 with the goal of completing mesoscopic brain circuit maps.

- China’s population of non-human primates and preexisting non-human primate research facilities give the China Brain Project an advantage. The project will focus on studying rhesus macaques.

Somatosensory cortex stimulation for spinal cord injuries

(Flesher et al., 2016)

- Gaunt, Flesher, and colleagues found that microstimulation of the primary somatosensory cortex (S1) partially restored tactile sensations to a patient with a spinal cord injury.

- Electrode arrays were implanted into the S1 region of a patient with a spinal cord injury. The arrays delivered intracortical microstimulation over a period of six months.

- The patient reported locations and perceptual qualities of the sensations elicited by microstimulation. The patient did not experience pain or “pins and needles” from any of the stimulus trains. Overall, 93% of the stimulus trains were reported as “possibly natural.”

- Results from this study might be used to engineer upper-limb neuroprostheses which provide somatosensory feedback.

Hippocampal prosthesis in monkeys

(S. A. Deadwyler et al., 2017)

- Theodore Berger continued developing his cognitive prosthesis and tested it in rhesus macaques.

- As with the rats, monkeys with the implant showed substantially improved performance on memory tasks.

The $100 billion Softbank Vision Fund

(Lomas, 2017)

- Masayoshi Son, the CEO of Softbank (a Japanese telecommunications corporation), announced a plan to raise $100 billion in venture capital to invest in artificial intelligence. This plan involved partnering with multiple large companies in order to raise this enormous amount of capital.

- By the end of 2017, the Vision Fund successfully reached its $100 billion goal. Masayoshi Son has since announced further plans to continue raising money with a new goal of over $800 billion.

- Masayoshi Son’s reason for these massive investments is the Technological Singularity. He agrees with Kurzweil that the Singularity will likely occur at around 2045 and he hopes to help bring the Singularity to fruition. Though Son is aware of the risks posed by artificial superintelligence, he feels that superintelligent AI’s potential to tackle some of humanity’s greatest challenges (such as climate change and the threat of nuclear war) outweighs those risks.

Bryan Johnson launches Kernel

(Regalado, 2017)

- Entrepreneur Bryan Johnson invested $100 million to start Kernel, a neurotechnology company.

- Kernel plans to develop implants that allow for recording and stimulation of large numbers of neurons at once. The company’s initial goal is to develop treatments for mental illnesses and neurodegenerative diseases. Its long-term goal is to enhance human intelligence.

- Kernel originally partnered with Theodore Berger and intended to utilize his hippocampal prosthesis. Unfortunately, Berger and Kernel parted ways after about six months because Berger’s vision was reportedly too long-range to support a financially viable company (at least for now).

- Kernel was originally a company called Kendall Research Systems. This company was started by a former member of the Boyden lab. In total, four members of Kernel’s team are former Boyden lab members.

Elon Musk launches Neuralink

(Etherington, 2017)

- Elon Musk (CEO of Tesla, SpaceX, and a number of other successful companies) initiated a neuroengineering venture called Neuralink.

- Neuralink will begin by developing brain-computer interfaces (BCIs) for clinical applications, but the ultimate goal of the company is to enhance human cognitive abilities in order to keep up with artificial intelligence.

- Though many of the details around Neuralink’s research are not yet open to the public, it has been rumored that injectable electronics similar to Lieber’s neural lace might be involved.

Facebook announces effort to build brain-computer interfaces

(Constine, 2017)

- Facebook revealed research on constructing non-invasive brain-computer interfaces (BCIs) at a company-run conference in 2017. The initiative is run by Regina Dugan, head of Building 8, Facebook’s R&D division.

- Facebook’s researchers are working on a non-invasive BCI which may eventually enable users to type one hundred words per minute with their thoughts alone. This effort builds on past investigations which have been used to help paralyzed patients.

- The Building 8 group is also developing a wearable device for “skin hearing.” Using just a series of vibrating actuators which mimic the cochlea, test subjects have so far been able to recognize up to nine words. Facebook intends to vastly expand this device’s capabilities.

DARPA funds research to develop improved brain-computer interfaces

(Hatmaker, 2017)

- The U.S. government agency DARPA awarded $65 million in total funding to six research groups.

- The recipients of this grant included five academic laboratories (headed by Arto Nurmikko, Ken Shepard, Jose-Alain Sahel and Serge Picaud, Vincent Pieribone, and Ehud Isacoff) and one small company called Paradromics Inc.

- DARPA’s goal for this initiative is to develop a nickel-sized bidirectional brain-computer interface (BCI) which can record from and stimulate up to one million individual neurons at once.

Human Brain Project analyzes brain computations using algebraic topology

(Reimann et al., 2017)

- Investigators at the Human Brain Project utilized algebraic topology to analyze the reconstructed ~31,000-neuron cortical microcircuit from their earlier work.

- The analysis involved representing the cortical network as a digraph, finding directed cliques (complete directed subgraphs belonging to a digraph), and determining the net directionality of information flow (by computing the sum of the squares of the differences between in-degree and out-degree for all the neurons in a clique). In algebraic topology, directed cliques of n neurons are called directed simplices of dimension n-1.

- Vast numbers of high-dimensional directed cliques were found in the cortical microcircuit (as compared to null models and other controls). Spike correlations between pairs of neurons within a clique were found to increase with the clique’s dimension and with the proximity of the neurons to the clique’s sink. Furthermore, topological metrics allowed insights into the flow of neural information among multiple cliques.

- Experimental patch-clamp data supported the significance of the findings. In addition, similar patterns were found within the C. elegans connectome, suggesting that the results may generalize to nervous systems across species.
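The clique search and the directionality measure described above can be sketched on a toy graph. The brute-force search below is only practical for very small networks and is not the authors’ software; the function names and the four-node example graph are hypothetical.

```python
from itertools import combinations, permutations


def directed_cliques(edges, nodes, size):
    """Find directed cliques of `size` nodes by brute force.

    Each result is an ordering (source first, sink last) in which every
    earlier node has an edge to every later node.
    """
    found = []
    for group in combinations(sorted(nodes), size):
        for order in permutations(group):
            if all((order[i], order[j]) in edges
                   for i in range(size) for j in range(i + 1, size)):
                found.append(order)
    return found


def directionality(edges, clique):
    """Sum of squared (in-degree minus out-degree), counted within the clique."""
    members = set(clique)
    total = 0
    for node in clique:
        in_degree = sum(1 for a, b in edges if b == node and a in members)
        out_degree = sum(1 for a, b in edges if a == node and b in members)
        total += (in_degree - out_degree) ** 2
    return total


# Toy digraph: a -> b -> c with a shortcut a -> c, plus a tail edge c -> d.
edges = {("a", "b"), ("a", "c"), ("b", "c"), ("c", "d")}
nodes = {"a", "b", "c", "d"}

triangles = directed_cliques(edges, nodes, 3)
score = directionality(edges, triangles[0])
```

Here the only directed clique of three neurons is (a, b, c), a directed simplex of dimension 2 with source a and sink c. Its directionality is 8, since a and c each contribute (±2)² = 4 and b contributes 0.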

Early testing of hippocampal prosthesis algorithm in humans

(Song, She, Hampson, Deadwyler, & Berger, 2017)

- Dong Song (who was working alongside Berger) tested the MIMO algorithm on human epilepsy patients using implanted recording and stimulation electrodes. The full hippocampal prosthesis was not implanted, but the electrodes acted similarly, though in a temporary capacity. Although only two patients were tested in this study, many trials were performed to compensate for the small sample size.

- Hippocampal spike trains from individual cells in CA1 and CA3 were recorded from the patients during a delayed match-to-sample task. The patients were shown various images while neural activity data were recorded by the electrodes and processed by the MIMO model. The patients were then asked to recall which image they had been shown previously by picking it from a group of “distractor” images. Memories encoded by the MIMO model were used to stimulate hippocampal cells during the recall phase.

- In comparison to controls in which the same two epilepsy patients were not assisted by the algorithm and stimulation, the experimental trials demonstrated a significant increase in successful pattern matching.

Brain imaging factory in China

(Cyranoski, 2017)

- Qingming Luo started the HUST-Suzhou Institute for Brainsmatics, a brain imaging “factory.” Each of the numerous machines in Luo’s facility performs automated processing and imaging of tissue samples. The devices make ultrathin slices of brain tissue using diamond blades, treat the samples with fluorescent stains or other contrast-enhancing chemicals, and image them using fluorescence microscopy.

- The institute has already demonstrated its potential by mapping the morphology of a previously unknown neuron which “wraps around” the entire mouse brain.

Automated patch-clamp robot for in vivo neural recording

(Suk et al., 2017)

- Ed S. Boyden and colleagues developed a robotic system to automate patch-clamp recordings from individual neurons. The robot was tested in vivo using mice and achieved a data collection yield similar to that of skilled human experimenters.

- By continuously imaging neural tissue using two-photon microscopy, the robot can adapt to a target cell’s movement and shift the pipette to compensate. This adaptation is facilitated by a novel algorithm called an imagepatching algorithm. As the pipette approaches its target, the algorithm adjusts the pipette’s trajectory based on the real-time two-photon microscopy.

- The robot can be used in vivo so long as the target cells express a fluorescent marker or otherwise fluoresce corresponding to their size and position.

Genome editing in the mammalian brain

(Nishiyama, Mikuni, & Yasuda, 2017)

- Precise genome editing in the brain has historically been challenging because most neurons are postmitotic (non-dividing) and the postmitotic state prevents homology-directed repair (HDR) from occurring. HDR is a mechanism of DNA repair which allows for targeted insertions of DNA fragments with overhangs homologous to the region of interest (by contrast, non-homologous end-joining is highly unpredictable).

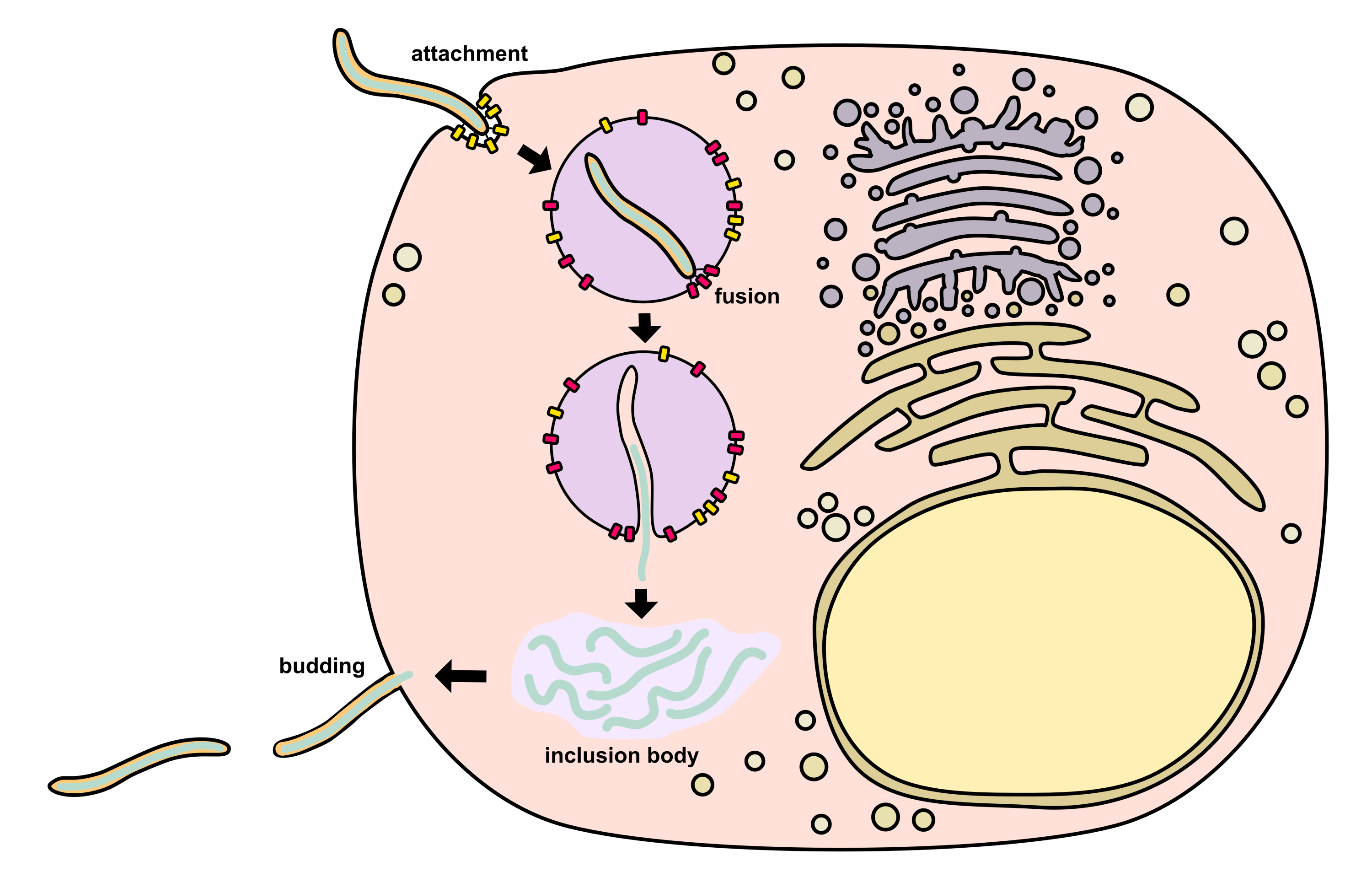

- Nishiyama, Mikuni, and Yasuda developed a technique which allows genome editing in postmitotic mammalian neurons using adeno-associated viruses (AAVs) and CRISPR-Cas9.

- The AAVs delivered ssDNA sequences encoding a single guide RNA (sgRNA) and an insert. Inserts encoding a hemagglutinin tag (HA) and inserts encoding EGFP were both tested. Cas9 was expressed endogenously by transgenic host cells and by transgenic host animals.

- The technique achieved precise genome editing in vitro and in vivo with a low rate of off-target effects. Inserts did not cause deletion of nearby endogenous sequences for 98.1% of infected neurons.

Near-infrared light and upconversion nanoparticles for optogenetic stimulation

(S. Chen et al., 2018)

- Upconversion nanoparticles absorb two or more low-energy photons and emit a higher energy photon. For instance, multiple near-infrared photons can be converted into a single visible spectrum photon.

- Shuo Chen and colleagues injected upconversion nanoparticles into the brains of mice and used them to convert externally applied near-infrared (NIR) light into visible light within the brain tissue. In this way, optogenetic stimulation was performed without the need for surgical implantation of fiber optics or similarly invasive procedures.

- The authors demonstrated stimulation via upconversion of NIR to blue light (to activate ChR2) and inhibition via upconversion of NIR to green light (to activate a rhodopsin called Arch).

- As a proof-of-concept, this technology was used to alter the behavior of the mice by activating hippocampally-encoded fear memories.
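Energy conservation sets a lower bound on how many near-infrared photons must be combined to produce one visible photon, since photon energy is E = hc/λ. The sketch below works through that bound; the specific wavelengths (980 nm for NIR, 470 nm for blue, 540 nm for green) are illustrative assumptions rather than values quoted from the paper, and the function names are hypothetical.

```python
import math

PLANCK = 6.62607015e-34     # Planck constant, J*s
LIGHT_SPEED = 2.99792458e8  # speed of light, m/s


def photon_energy_joules(wavelength_nm):
    """Energy of a single photon, E = h * c / wavelength."""
    return PLANCK * LIGHT_SPEED / (wavelength_nm * 1e-9)


def min_photons_needed(absorbed_nm, emitted_nm):
    """Fewest absorbed photons whose combined energy covers one emitted photon."""
    ratio = photon_energy_joules(emitted_nm) / photon_energy_joules(absorbed_nm)
    # Small tolerance guards against floating-point noise near exact integers.
    return math.ceil(ratio - 1e-12)


for_blue = min_photons_needed(980, 470)   # assumed NIR -> blue
for_green = min_photons_needed(980, 540)  # assumed NIR -> green
```

With these assumed wavelengths, green emission needs at least two NIR photons and blue emission needs at least three, consistent with the "two or more" photons mentioned above. Real nanoparticles lose some energy in the process, so this is only a lower bound.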

Map of all neuronal cell bodies within mouse brain

(Murakami et al., 2018)

- Ueda, Murakami, and colleagues combined methods from expansion microscopy and CLARITY to develop a protocol called CUBIC-X which both expands and clears entire brains. Light-sheet fluorescence microscopy was used to image the treated brains and a novel algorithm was developed to detect individual nuclei.

- Although expansion microscopy causes some increased tissue transparency on its own, CUBIC-X greatly improved this property in the enlarged tissues, facilitating more detailed whole-brain imaging.

- Using CUBIC-X, the spatial locations of all the cell bodies (but not dendrites, axons, or synapses) within the mouse brain were mapped. This process was performed upon several adult mouse brains as well as several developing mouse brains to allow for comparative analysis.

- The authors made the spatial atlas publicly available in order to facilitate global cooperation towards annotating connectivity among the neural cell bodies within the atlas.

Clinical testing of hippocampal prosthesis algorithm in humans

(Hampson et al., 2018)

- Further clinical tests of Berger’s hippocampal prosthesis were performed. Twenty-one patients took part in the experiments. Seventeen patients underwent CA3 recording so as to facilitate training and optimization of the MIMO model. Eight patients received CA1 stimulation so as to improve their memories.

- Electrodes with the ability to record from single neurons (10-24 single-neuron recording sites) and via EEG (4-6 EEG recording sites) were implanted such that recording and stimulation could occur at CA3 and CA1 respectively.

- Patients performed behavioral memory tasks. Both short-term and long-term memory showed an average improvement of 35% across the patients who underwent stimulation.

Precise optogenetic manipulation of fifty neurons

(Mardinly et al., 2018)

- Mardinly and colleagues engineered a novel excitatory optogenetic ion channel called ST-ChroME and a novel inhibitory optogenetic ion channel called IRES-ST-eGtACR1. The channels were localized to the somas of host neurons and generated stronger photocurrents over shorter timescales than previously existing opsins, allowing for powerful and precise optogenetic stimulation and inhibition.

- 3D-SHOT is an optical technique in which light is tuned by a device called a spatial light modulator along with several other optical components. Using 3D-SHOT, light was precisely projected upon targeted neurons within a volume of 550×550×100 μm³.

- By combining novel optogenetic ion channels and the 3D-SHOT technique, complex patterns of neural activity were created in vivo with high spatial and temporal precision.

- Simultaneously, calcium imaging allowed measurement of the induced neural activity. More custom optoelectronic components helped avoid optical crosstalk of the fluorescent calcium markers with the photostimulating laser.

Whole-brain Drosophila connectome data acquired via serial electron microscopy

(Zheng et al., 2018)

- Zheng, Bock, and colleagues collected serial electron microscopy data on the entire adult Drosophila connectome, providing the data necessary to reconstruct a complete structural map of the fly’s brain at the resolution of individual synapses, dendritic spines, and axonal processes.

- The data are in the form of 7,050 transmission electron microscopy images (187,500 × 87,500 pixels and 16 GB per image), each representing a 40-nm-thin slice of the fly’s brain. In total, the dataset requires 106 TB of storage.

- Although much of the data still must be processed to reconstruct a 3-dimensional map of the Drosophila brain, the authors did create 3-dimensional reconstructions of selected areas in the olfactory pathway of the fly. In doing so, they discovered a new cell type as well as several other previously unrealized insights about the organization of Drosophila’s olfactory biology.
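The storage figures quoted above can be sanity-checked with simple arithmetic. The sketch assumes uncompressed 8-bit grayscale images (one byte per pixel), which is an assumption made here for illustration and not a detail stated in the summary.

```python
images = 7050
width_px, height_px = 187_500, 87_500
bytes_per_pixel = 1  # assumption: 8-bit grayscale, no compression

bytes_per_image = width_px * height_px * bytes_per_pixel
total_bytes = images * bytes_per_image

gb_per_image = bytes_per_image / 1e9      # decimal gigabytes
total_tb_decimal = total_bytes / 1e12     # decimal terabytes
total_tib_binary = total_bytes / 2 ** 40  # binary tebibytes

print(f"{gb_per_image:.1f} GB per image")
print(f"{total_tb_decimal:.1f} TB (decimal) or {total_tib_binary:.1f} TiB (binary)")
```

Under that assumption each image comes to roughly 16 GB and the full stack lands in the same range as the reported total. The exact figure depends on whether decimal or binary units are used.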

References

Akopyan, F., Sawada, J., Cassidy, A., Alvarez-Icaza, R., Arthur, J., Merolla, P., … Modha, D. S. (2015). TrueNorth: Design and Tool Flow of a 65 mW 1 Million Neuron Programmable Neurosynaptic Chip. IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems, 34(10), 1537–1557. http://doi.org/10.1109/TCAD.2015.2474396

Berger, T. W., Song, D., Chan, R. H. M., Marmarelis, V. Z., LaCoss, J., Wills, J., … Granacki, J. J. (2012). A Hippocampal Cognitive Prosthesis: Multi-Input, Multi-Output Nonlinear Modeling and VLSI Implementation. IEEE Transactions on Neural Systems and Rehabilitation Engineering, 20(2), 198–211. http://doi.org/10.1109/TNSRE.2012.2189133

Berning, S., Willig, K. I., Steffens, H., Dibaj, P., & Hell, S. W. (2012). Nanoscopy in a Living Mouse Brain. Science, 335(6068), 551. Retrieved from http://science.sciencemag.org/content/335/6068/551.abstract

Boyden, E. S., Zhang, F., Bamberg, E., Nagel, G., & Deisseroth, K. (2005). Millisecond-timescale, genetically targeted optical control of neural activity. Nature Neuroscience, 8, 1263. Retrieved from http://dx.doi.org/10.1038/nn1525

Chen, F., Tillberg, P. W., & Boyden, E. S. (2015). Expansion microscopy. Science, 347(6221), 543–548. Retrieved from http://science.sciencemag.org/content/347/6221/543.abstract

Chen, F., Wassie, A. T., Cote, A. J., Sinha, A., Alon, S., Asano, S., … Boyden, E. S. (2016). Nanoscale imaging of RNA with expansion microscopy. Nature Methods, 13, 679. Retrieved from http://dx.doi.org/10.1038/nmeth.3899

Chen, S., Weitemier, A. Z., Zeng, X., He, L., Wang, X., Tao, Y., … McHugh, T. J. (2018). Near-infrared deep brain stimulation via upconversion nanoparticle–mediated optogenetics. Science, 359(6376), 679–684. Retrieved from http://science.sciencemag.org/content/359/6376/679.abstract

Chung, K., & Deisseroth, K. (2013). CLARITY for mapping the nervous system. Nature Methods, 10, 508. Retrieved from http://dx.doi.org/10.1038/nmeth.2481

Constine, J. (2017). Facebook is building brain-computer interfaces for typing and skin-hearing. TechCrunch. Retrieved from https://techcrunch.com/2017/04/19/facebook-brain-interface/

Cyranoski, D. (2017). China launches brain-imaging factory. Nature, 548(7667), 268–269. http://doi.org/10.1038/548268a

Deadwyler, S. A., Hampson, R. E., Song, D., Opris, I., Gerhardt, G. A., Marmarelis, V. Z., & Berger, T. W. (2017). A cognitive prosthesis for memory facilitation by closed-loop functional ensemble stimulation of hippocampal neurons in primate brain. Experimental Neurology, 287, 452–460. http://doi.org/10.1016/j.expneurol.2016.05.031

Deadwyler, S., Hampson, R., Sweat, A., Song, D., Chan, R., Opris, I., … Berger, T. (2013). Donor/recipient enhancement of memory in rat hippocampus. Frontiers in Systems Neuroscience. Retrieved from https://www.frontiersin.org/article/10.3389/fnsys.2013.00120

Etherington, D. (2017). Elon Musk’s Neuralink wants to boost the brain to keep up with AI. TechCrunch. Retrieved from https://techcrunch.com/2017/03/27/elon-musks-neuralink-wants-to-boost-the-brain-to-keep-up-with-ai/

Fact Sheet: BRAIN Initiative. (2013). Retrieved from https://obamawhitehouse.archives.gov/the-press-office/2013/04/02/fact-sheet-brain-initiative

Flesher, S. N., Collinger, J. L., Foldes, S. T., Weiss, J. M., Downey, J. E., Tyler-Kabara, E. C., … Gaunt, R. A. (2016). Intracortical microstimulation of human somatosensory cortex. Science Translational Medicine. Retrieved from http://stm.sciencemag.org/content/early/2016/10/12/scitranslmed.aaf8083.abstract

Gunaydin, L. A., Yizhar, O., Berndt, A., Sohal, V. S., Deisseroth, K., & Hegemann, P. (2010). Ultrafast optogenetic control. Nature Neuroscience, 13, 387. Retrieved from http://dx.doi.org/10.1038/nn.2495

Hampson, R. E., Song, D., Robinson, B. S., Fetterhoff, D., Dakos, A. S., Roeder, B. M., … Deadwyler, S. A. (2018). Developing a hippocampal neural prosthetic to facilitate human memory encoding and recall. Journal of Neural Engineering, 15(3), 036014. http://doi.org/10.1088/1741-2552/aaaed7

Han, X., & Boyden, E. S. (2007). Multiple-Color Optical Activation, Silencing, and Desynchronization of Neural Activity, with Single-Spike Temporal Resolution. PLOS ONE, 2(3), e299. Retrieved from https://doi.org/10.1371/journal.pone.0000299

Hatmaker, T. (2017). DARPA awards $65 million to develop the perfect, tiny two-way brain-computer interface. TechCrunch. Retrieved from https://techcrunch.com/2017/07/10/darpa-nesd-grants-paradromics/

Liu, J., Fu, T.-M., Cheng, Z., Hong, G., Zhou, T., Jin, L., … Lieber, C. M. (2015). Syringe-injectable electronics. Nature Nanotechnology, 10, 629. Retrieved from http://dx.doi.org/10.1038/nnano.2015.115

Livet, J., Weissman, T. A., Kang, H., Draft, R. W., Lu, J., Bennis, R. A., … Lichtman, J. W. (2007). Transgenic strategies for combinatorial expression of fluorescent proteins in the nervous system. Nature, 450, 56. Retrieved from http://dx.doi.org/10.1038/nature06293

Lomas, N. (2017). Superintelligent AI explains Softbank’s push to raise a $100BN Vision Fund. TechCrunch. Retrieved from https://techcrunch.com/2017/02/27/superintelligent-ai-explains-softbanks-push-to-raise-a-100bn-vision-fund/

Mardinly, A. R., Oldenburg, I. A., Pégard, N. C., Sridharan, S., Lyall, E. H., Chesnov, K., … Adesnik, H. (2018). Precise multimodal optical control of neural ensemble activity. Nature Neuroscience, 21(6), 881–893. http://doi.org/10.1038/s41593-018-0139-8

Markram, H. (2006). The Blue Brain Project. Nature Reviews Neuroscience, 7, 153. Retrieved from http://dx.doi.org/10.1038/nrn1848

Markram, H., Muller, E., Ramaswamy, S., Reimann, M. W., Abdellah, M., Sanchez, C. A., … Schürmann, F. (2015). Reconstruction and Simulation of Neocortical Microcircuitry. Cell, 163(2), 456–492. http://doi.org/10.1016/j.cell.2015.09.029

Marx, V. (2013). Neuroscience waves to the crowd. Nature Methods, 10, 1069. Retrieved from http://dx.doi.org/10.1038/nmeth.2695

Murakami, T. C., Mano, T., Saikawa, S., Horiguchi, S. A., Shigeta, D., Baba, K., … Ueda, H. R. (2018). A three-dimensional single-cell-resolution whole-brain atlas using CUBIC-X expansion microscopy and tissue clearing. Nature Neuroscience, 21(4), 625–637. http://doi.org/10.1038/s41593-018-0109-1

Nishiyama, J., Mikuni, T., & Yasuda, R. (2017). Virus-Mediated Genome Editing via Homology-Directed Repair in Mitotic and Postmitotic Cells in Mammalian Brain. Neuron, 96(4), 755–768.e5. http://doi.org/10.1016/j.neuron.2017.10.004

Oizumi, M., Albantakis, L., & Tononi, G. (2014). From the Phenomenology to the Mechanisms of Consciousness: Integrated Information Theory 3.0. PLOS Computational Biology, 10(5), e1003588. Retrieved from https://doi.org/10.1371/journal.pcbi.1003588

Okano, H., Miyawaki, A., & Kasai, K. (2015). Brain/MINDS: brain-mapping project in Japan. Philosophical Transactions of the Royal Society of London. Series B, Biological Sciences, 370(1668). http://doi.org/10.1098/rstb.2014.0310

Poo, M., Du, J., Ip, N. Y., Xiong, Z.-Q., Xu, B., & Tan, T. (2016). China Brain Project: Basic Neuroscience, Brain Diseases, and Brain-Inspired Computing. Neuron, 92(3), 591–596. http://doi.org/10.1016/j.neuron.2016.10.050

Regalado, A. (2017). The Entrepreneur with the $100 Million Plan to Link Brains to Computers. MIT Technology Review. Retrieved from https://www.technologyreview.com/s/603771/the-entrepreneur-with-the-100-million-plan-to-link-brains-to-computers/

Reimann, M. W., Nolte, M., Scolamiero, M., Turner, K., Perin, R., Chindemi, G., … Markram, H. (2017). Cliques of Neurons Bound into Cavities Provide a Missing Link between Structure and Function. Frontiers in Computational Neuroscience. Retrieved from https://www.frontiersin.org/article/10.3389/fncom.2017.00048

Seo, D., Neely, R. M., Shen, K., Singhal, U., Alon, E., Rabaey, J. M., … Maharbiz, M. M. (2016). Wireless Recording in the Peripheral Nervous System with Ultrasonic Neural Dust. Neuron, 91(3), 529–539. http://doi.org/10.1016/j.neuron.2016.06.034

Song, D., She, X., Hampson, R. E., Deadwyler, S. A., & Berger, T. W. (2017). Multi-resolution multi-trial sparse classification model for decoding visual memories from hippocampal spikes in human. In 2017 39th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC) (pp. 1046–1049). http://doi.org/10.1109/EMBC.2017.8037006

Suk, H.-J., van Welie, I., Kodandaramaiah, S. B., Allen, B., Forest, C. R., & Boyden, E. S. (2017). Closed-Loop Real-Time Imaging Enables Fully Automated Cell-Targeted Patch-Clamp Neural Recording In Vivo. Neuron, 95(5), 1037–1047.e11. http://doi.org/10.1016/j.neuron.2017.08.011

Szigeti, B., Gleeson, P., Vella, M., Khayrulin, S., Palyanov, A., Hokanson, J., … Larson, S. (2014). OpenWorm: an open-science approach to modeling Caenorhabditis elegans. Frontiers in Computational Neuroscience. Retrieved from https://www.frontiersin.org/article/10.3389/fncom.2014.00137

Zheng, Z., Lauritzen, J. S., Perlman, E., Robinson, C. G., Nichols, M., Milkie, D., … Bock, D. D. (2018). A Complete Electron Microscopy Volume of the Brain of Adult Drosophila melanogaster. Cell, 174(3), 730–743.e22. http://doi.org/10.1016/j.cell.2018.06.019